Most organisations say the right things about responsible AI now. The language is polished. The principles are laminated. The slide decks are tidy.

Then someone tries to ship something.

That is where responsible AI stops being an idea and becomes a test of organisational maturity. Not maturity as in messaging. Maturity as in: can you make decisions under pressure, with real accountability, without creating a maze so complex that nothing gets done?

When I spoke with Aurelie Jacquet — a globally recognised leader in responsible AI governance, standards, and assurance — the conversation kept circling back to that question. Aurelie has spent the last nine years in the work: not theorising the ethics of AI from a distance, but helping large organisations and governments translate “trustworthy AI” from principles into decision rights, controls, evidence, and practice that builders can actually use.

And if there was one theme that came through clearly, it was this: responsible AI is not a new religion. It is delivery discipline.

Responsible AI’s first mistake: treating AI like it lives in its own world

Early in the conversation, Aurelie described three principles that have shaped the way they think about responsible AI in practice. The first landed like a corrective.

“You do not reinvent the wheel for AI. You need to build on existing practices.”

It is a simple sentence, but it explains a lot of failure. A surprising number of organisations still try to manage AI risk as if it is separate from everything else: separate from product governance, separate from enterprise risk, separate from privacy and security, separate from procurement, separate from delivery.

That separation feels neat. It also guarantees you will stall.

Aurelie’s point was that if AI is powering services, then it belongs inside the existing systems that already govern services. If you create a parallel responsible AI regime, teams will spend their time translating between two operating systems. Worse, they will bypass it when deadlines arrive.

The deeper idea here is not “reuse existing controls” as a cost-saving trick. It is that accountability does not move simply because the technology changed. The organisation is still accountable. The business is still accountable. The duty to manage risk and deliver safely does not relocate to a new committee because the word “AI” is involved.

That is also why Aurelie was sceptical of the constant relabelling we do in this space. Algorithms, AI, generative AI, agentic AI, frontier models — the surface changes fast, but many of the underlying risk categories recur: privacy, IP, security, fairness, transparency, governance. The job is to keep improving how those risks are handled as systems become more powerful and more dynamic.

The second mistake: writing principles you cannot apply

Aurelie’s second shaping principle is where the “interesting” part begins — because it goes straight at the gap between executive intent and operational reality.

The policy documents are not the hard part. The hard part is the use case.

Aurelie put it plainly: high-level policy and strategy can be “very nice”, but if you cannot tailor them to the use case level, “they’re not worth the piece of paper they’ve been written on.”

Anyone who has sat in a project room knows what that means in practice. Teams do not need another statement about fairness. They need to know:

- What does fairness mean here, with this data, in this context?

- What evidence do we need to show before we go live?

- Who is allowed to decide we can proceed?

- Who is allowed to stop the system if something feels wrong?

Responsible AI becomes real when it answers those questions without making delivery impossible.

And this is where Aurelie’s third principle matters. It is not enough to be “responsible” in concept. The practices have to match how builders build.

Aurelie described it as developing practices that are agile — “by design” if you prefer — and actually map to builders’ practices. One of the first questions Aurelie remembers getting, back in 2018, was from teams asking: “How do you do fairness?” The answer was not a compliance checklist. It was starting from the beginning and working through what fairness meant in that team’s context, and how it could be embedded into the work rather than bolted on later.

This is where it gets real: retrofitting trust is expensive. Sometimes it is impossible.

The first sign responsibility is real: risk appetite, not slogans

When I asked Aurelie what separates real responsible AI from messaging, the answer was not “more training” or “more awareness.” It was governance that can make decisions.

At the organisational level, Aurelie highlighted the importance of having your AI risk appetite in order. That is not an abstract exercise. It is a clear statement of what is within appetite and what is not.

Aurelie gave an example from earlier waves of AI adoption: some organisations assessed emotion recognition as not accurate enough and decided to ban it altogether. The point is not that every organisation must do the same. The point is that someone had enough clarity, and enough authority, to make the call.

Risk appetite is responsibility in its most practical form. It prevents teams being set up to fail. It stops you approving things you do not understand and then acting surprised later.

“Committee limbo”: the failure mode that looks like governance

Then Aurelie named a pattern that will be painfully familiar to many public sector leaders: when governance structures exist, but no one can actually decide.

If you do not have clear governance structures, Aurelie said, you get stuck in “committee lock” — a kind of limbo where a use case moves from privacy committee to security committee to risk committee and back again. Every group is trying to be careful. The overall effect is paralysis.

It is tempting to see this as the “price of responsibility.” Aurelie’s view is closer to the opposite: committee limbo is often the sign that accountability is not properly designed. It is the sign that decision rights are unclear, and trade-offs are not being managed in the right place.

And delay is not neutral. The longer it takes to ship responsibly, the more teams will experiment unsafely, informally, or in ways that drift outside the official line of sight. That is when trust gets damaged — not necessarily because a team was reckless, but because the system made “doing it right” feel impossible.

Accountability isn’t oversight. It is ownership.

When we moved into accountability, Aurelie started by separating two concepts that are often blended: accountability and oversight.

“There’s often a confusion between accountability and oversight… oversight helps for accountability, but effectively, even if it goes wrong, there’ll be an organisation accountable for it.”

This matters because some organisations respond to AI by creating more oversight structures, as if oversight is accountability. Oversight can help. But it does not replace ownership.

Aurelie’s “back to basic” view was clear: in most cases, accountability still sits with the business — the part of the organisation using the system to deliver services. If you shift that away and try to make someone else accountable, you make it harder to manage, because the accountable party is no longer the party that understands the service context.

What makes AI challenging, Aurelie argued, is not that accountability disappears. It is that AI creates stronger interdependencies across risk classes. Privacy depends on security. Security depends on data handling. Data handling depends on system design and monitoring. Risk classes have to work more closely together, and someone has to be empowered to manage the trade-offs.

That is what “meaningful accountability” looks like in practice.

The minimum credible threshold: can a leader say the hard sentence?

One of the most useful parts of the conversation came when I asked a plain question: if an executive asked, “How do we know this is safe enough to deploy?”, what is the smallest credible set of checks?

Aurelie’s answer started with a sentence leaders should be able to say with confidence:

“I understand the risk and I can explain why AI is a better alternative and how it maintains its performance. It’s fit for purpose.”

That is not a rhetorical line. It is a standard of responsibility. If you cannot say it, you should not be approving deployment.

To get to that point, Aurelie emphasised a few non-negotiables: the ability to identify, manage, and monitor risk; the need for triage because there will be hundreds of use cases; and the reality that AI systems are dynamic, not static.

“You just can’t leave it in the wild,” Aurelie said, unless you want surprises.

Aurelie also made a distinction that’s worth repeating because it cuts through hype and fear at the same time:

“AI is not creating new risk. It is amplifying risk and creating new risk source.”

In other words, many risk categories are familiar — but AI changes the speed, scale, and pathways by which risk emerges. In security terms, for example, it is not only the data that needs protection. The model becomes an asset too. That is a meaningful shift.

And because risk management is never perfect, incident management matters. Aurelie referenced an algorithmic trading background, where teams assumed change could happen fast and planned accordingly. AI needs that same posture: a disciplined approach to detection, response, and learning.

Data governance stops being a blocker when you realise what agents will do

As we moved toward data governance, Aurelie framed the stakes in a way that felt very “2026.”

With agentic AI rising, data quality management and access control are no longer just best practice. They are a defensive posture.

If organisations do not manage and protect data assets, Aurelie warned, agents will collect and use that data and “you’re giving it for free.” There’s a trust implication there, but also a strategic one: assets that are not protected are assets that do not compound value for the organisation.

This is where responsible AI intersects directly with trust — not trust as a brand attribute, but trust as the condition for scale.

Standards can help — but only if they make delivery easier, not slower

Finally, we spoke about standards and certification — including ISO 42001 — and what separates something genuinely useful from compliance theatre.

Aurelie’s argument was that standards are valuable when they define clear baseline controls — “the must do” — without trying to prescribe every step. They provide repeatable structure that organisations can embed into their own operating model.

But the differentiator is still practice. The organisations that scale responsibly are the ones that align adoption leadership with risk leadership — not as a box-ticking exercise, but so the organisation can move beyond proof-of-concept into durable capability.

The big idea I took away from Aurelie is that responsible AI is not about saying the right principles. It is about building the kind of organisation that can hold two things at once: speed and scrutiny.

That maps cleanly to what we mean by Government 3.0: connected government that is citizen-centred by design, and capable of continuous improvement — with trust engineered into delivery rather than retrofitted after the fact.

Because in the end, responsible AI is not the work of a policy. It is the work of a system.

And you can tell when it is real, because it ships.

Published by

About our partner

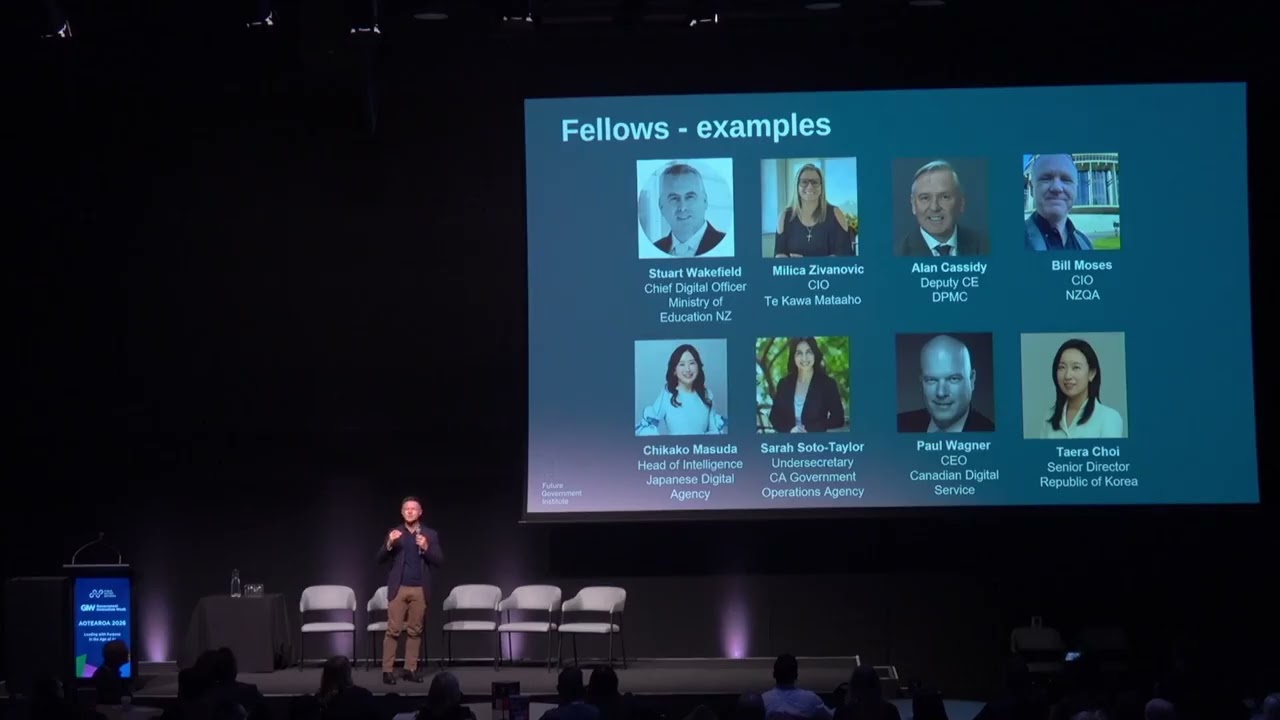

Future Government Institute (FGI)

We are the independent global institute that assesses, benchmarks, and builds digital government capability. Supported by 250+ Fellows from 40+ countries, the Future Government Institute provides the FGX Rating System, the world's first independent rating for government digital capability, showing where organisations sit from G0 to G4. Through FGX Assessments, Communities of Practice, G3 Ready certification, and the FGX Capability Platform, we connect practitioners worldwide to build Government 3.0 capability together. Founded by The Hon. Victor Dominello, the Institute is the movement from Government 2.0 to Government 3.0, and ready for Government 4.0.

FGX Assessments provide independent, evidence-based measurement of digital government capability from G0 to G4. Whether you're assessing individual practitioner capability or organisational readiness, FGX shows exactly where you stand and provides a clear pathway to G3 Ready certification, the global standard for Government 3.0 capability.
